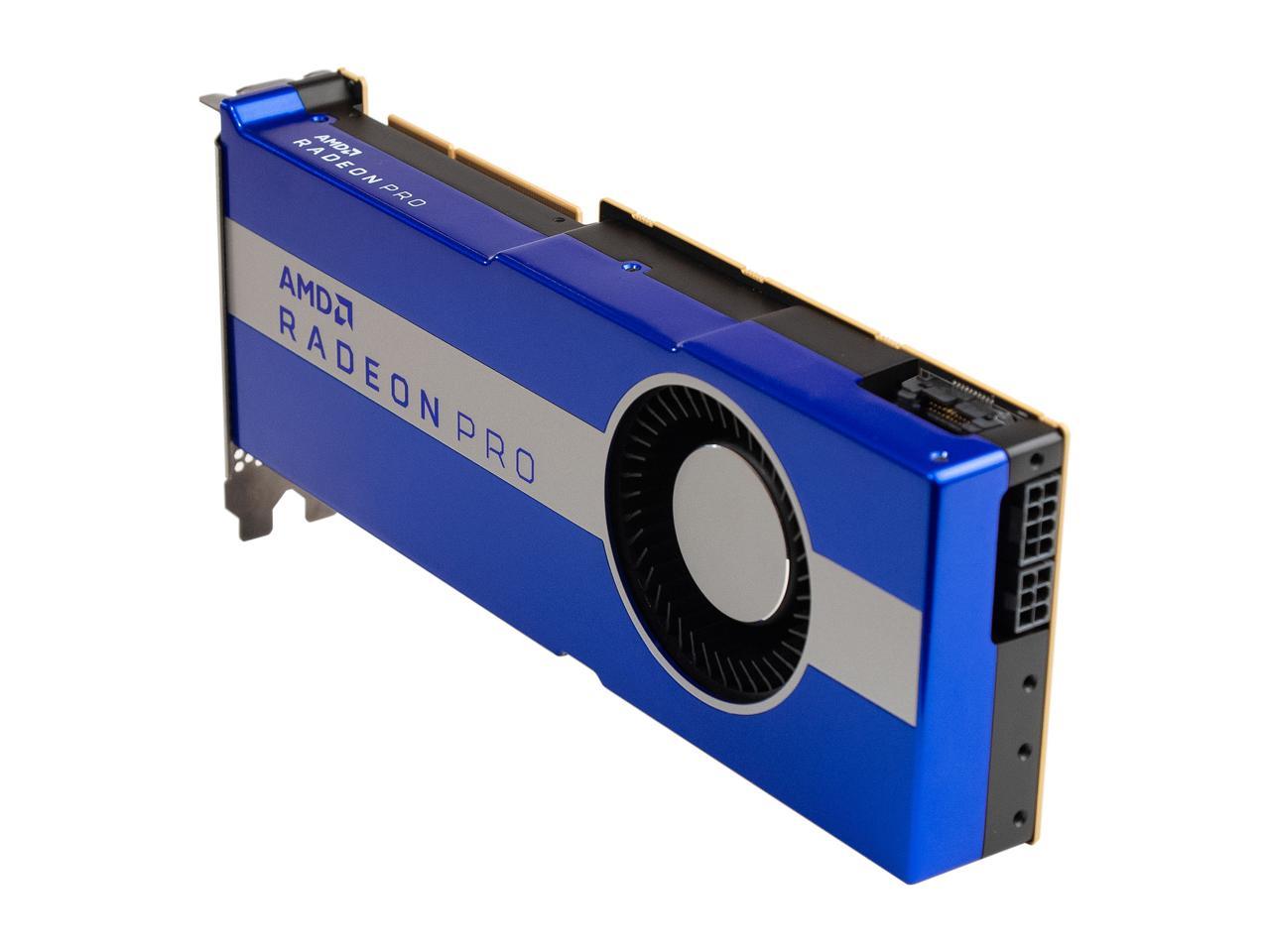

AMD Radeon VII

#AMD RADEON VII PC#

(Ars Technica may earn compensation for sales from links on this post through affiliate programs.)

In the world of computer graphics cards, AMD has been behind its only rival, Nvidia, for as long as we can remember. But a confluence of recent events finally left AMD with a sizable opportunity in the market.

Having established a serious lead with its GTX graphics cards, Nvidia tried something completely different last year. Its RTX line of cards essentially arrived with power nearly equivalent to that of its prior generation for the same price (along with a new, staggering $1,200 card in its "consumer" line). The catch was that these cards' new, proprietary cores were supposed to enable a few killer perks in higher-end graphics rendering. But that big bet faltered, largely because only one truly RTX-compatible retail game currently exists, and Nvidia took the unusual step of warning investors about this fact.

Meanwhile, AMD finally pulled off a holy-grail number for its graphics cards: 7nm. As in, a tiny fabrication process that frees up more room on a GPU's silicon for other hardware and features (the Radeon VII's HBM2 RAM shares die space with the GPU). In the case of this week's AMD Radeon VII, which goes on sale today, February 7, for $699, that extra space is dedicated to a whopping 16GB of VRAM, well above the 11GB maximum of any consumer-grade Nvidia product. AMD also insists that its memory bandwidth has been streamlined to make that VII-specific perk valuable for any 3D application.

An emphasis on more straight-up speed and power is better for everyone in the PC gaming space, right? Not if Nvidia's own cards, full of proprietary perks, still manage to meet or exceed the Radeon VII at roughly the same price point.

The AMD Radeon VII's architecture and design priorities offer an interesting peek at how the battle against Nvidia could heat up in the near future. For now, AMD has to settle for merely clawing its way back to striking distance of its current $700 GPU rival.

#AMD RADEON VII PS4#

There's a lot to get excited about when reading the Radeon VII's stat sheet: its maximum boost clock of 1,800MHz, its 1TB/sec memory bandwidth, and its 16GB of what AMD calls "HBM2" memory, aka the second generation of its proprietary High-Bandwidth Memory (which AMD has previously described as a more power-efficient take on GDDR5). Those three stat points soundly surpass 2017's AMD RX Vega 64 card, not to mention anything Nvidia markets, though the RTX line favors GDDR6 memory. In fact, the Radeon VII also beats the comparably priced RTX 2080 in pretty much every stat category, including stream processors (what Nvidia likes to call CUDA cores) and texture units.

If this review were nothing more than a contest of numbers, AMD would come out on top.

#AMD RADEON VII FULL#

What gives? AMD hasn't offered an in-depth response about how we should expect existing games to tap into the Radeon VII's impressive stats. Instead, the company offers a bevy of forward-thinking statements. For one, it lists the growing appetite for higher "recommended" VRAM footprints in games on an annual basis.

The problem with this logic is that it assumes PC game makers are developing games whose content scales most notably because of available VRAM, as opposed to games whose settings shrink and grow with clock speeds and SPs/CUDA cores in mind. The current market of PC games is scaling up from consoles with a respectable amount of VRAM, though both Xbox One and PS4 share their total memory pool for both standard RAM and VRAM purposes. Which is to say, a "pro" console-equivalent video card can operate in the 2-4GB range of VRAM.

For now, PC games aren't truly tapping into that aspect of the VII. AMD is clearly in a position to know what's to come from whatever we're calling the PlayStation 5 and Xbox One Point Two, so it's fair to assume that today's "ridiculous" amount of VRAM will probably seem quaint tomorrow.